How to Vet a Software Vendor’s Dev Team Before Signing

A couple of years back we took over a Web3 project from another vendor. Their codebase was technically unsalvageable. Significant tech debt. Mock-ups mixed with production code. No way to tell what actually worked. The client mentioned later that the previous vendor had been hard to communicate with from week one.

None of those problems were invisible at the start. They just hadn’t been tested for.

Most buyers vet vendor teams on the wrong signals: years of experience, stack lists, polished sample architectures. I asked our CTO and five senior developers what to look for instead. This article is based on what they said.

One caveat before we dive in: a strong team isn’t just “great developers.” On their own, even great developers almost never deliver a successful project. The team builds the wrong thing, scope bloats, tech debt piles up, decisions take weeks instead of hours, the market shifts under you – any of these sinks a project before code itself becomes the issue.

That’s why we don’t run a team without a product owner. The PO keeps business goals, scope, and market reality in front of the team – but the team has to grasp them too. Also, good engineers don’t wait for someone to translate the business for them; they ask, they understand, and they make calls accordingly. A team with both – a strong PO and engineers who own the business context – is what carries even the most complicated products to the finish line.

We’ve taken over enough struggling projects to know what happens without that role: capable developers in trouble because product, business, and technical decisions stopped talking to each other. The rest of this article focuses on the engineering side – how to spot a dev team that thinks before it codes, asks the right questions, and keeps the project aligned when pressure hits.


What to Verify When Vetting a Software Vendor’s Dev Team

What you actually need to know about a vendor’s team is whether the specific people they put on your project can think their way through your specific problem. Past credentials and case studies don’t answer that.

This isn’t just our opinion. The Leadership IQ research behind Mark Murphy’s Hiring for Attitude tracked 20,000 new hires over three years and found that 46% failed within 18 months. Only 11% of those failures came down to insufficient technical skill. The other 89% came down to attitude: coachability, emotional intelligence, motivation, and temperament. Skills, in other words, are usually the easy part to verify, and rarely the part that breaks.
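To put those percentages in headcount terms, here is a rough back-of-the-envelope sketch. The counts are derived only from the three figures quoted above (20,000 hires, 46%, 11%). They are not numbers reported by the study itself, and the variable names are ours.

```python
# Figures as quoted in this article; the derived counts are our own arithmetic.
NEW_HIRES = 20_000
FAILED_PCT = 46      # share of new hires who failed within 18 months
TECHNICAL_PCT = 11   # share of those failures attributed to technical skill

failed = NEW_HIRES * FAILED_PCT // 100        # hires who failed
technical = failed * TECHNICAL_PCT // 100     # failures from missing skills
attitude = failed - technical                 # failures from attitude

print(f"Failed within 18 months: {failed}")
print(f"  for technical reasons: {technical}")
print(f"  for attitude reasons:  {attitude}")
print(f"Technical failures as a share of all hires: {technical / NEW_HIRES:.1%}")
```

Read this way, skill-related failures come to roughly one new hire in twenty, which is why the rest of this article spends its time on the attitude side.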

The same logic applies to vendor selection. You can verify a vendor’s technical skills in plenty of ways: sample architectures, code samples, certifications, or a small paid evaluation project. What’s harder, and more important, is verifying whether the team you’re about to engage will take feedback well, surface bad news early, push back on things that don’t make sense, and stay engaged when the project gets hard. Those are attitude tests, not skill tests.

According to Kacper, the cleanest way to draw the line is this:

- How to write it is junior territory.

- How to design it (so the system stays scalable, secure, performant, and at the same time not over-engineered for what the business actually needs) is senior territory.

Concretely, that means judgment and the ability to understand the business context and ask appropriate questions. Trade-off reasoning. The ability to explain the why behind a technical choice. Honest reflection on past mistakes. This is the lens our CTO and five of our senior developers – asked independently – told me they would use when evaluating the specific engineers a vendor puts forward. They’re all from PC, though, so take it as how we look at this in-house, not as a universal rule.

Who to Interview on the Vendor’s Team (and Why)

The standard play is to talk to the Tech Lead. The Tech Lead gives you the team’s architectural thinking, delivery process, quality bar, and how they handle conflict with the business. Because turnover is a reality in this industry, you also need the Tech Lead (or Engineering Manager) to walk you through their hiring and onboarding processes. If a developer leaves, how do they ensure the replacement isn’t just a random hire off the street who starts with no project context? You also need to verify allocation: are these engineers dedicated full-time, or are you getting fractional attention?

But you should also talk to a Senior Developer.

Here is the catch: a strong vendor usually won’t guarantee specific developers before the contract is signed. Available engineers get assigned to whichever client signs first. If a vendor eagerly promises you specific names upfront, it’s often a sign of desperation (a bloated bench) or a classic bait-and-switch: guaranteeing their stars during sales, only to swap them out once the contract is signed.

So why interview a Senior Dev if they might not end up on your project? Because you are sampling the vendor’s talent bar. A Senior Dev gives you hands-on judgment patterns: how they engage with a real problem, whether they ask clarifying questions before jumping to a recommendation, and whether they can name the trade-offs of decisions they’ve made. This tells you what kind of people their recruitment process actually produces.

Other vendor-side people you meet during sales (account managers, sales engineers, sometimes a product person) can add useful context, but they’re not where the decision-grade signal lives. Anchor your decision on the Tech Lead’s process and the Senior Dev’s caliber.

How to Interview a Vendor’s Tech Lead

The questions below come from our CTO Marcin’s checklist for vetting an external Tech Lead, with one drawn from our internal interview guide for senior technical hires. Each one tests for something specific, and almost none of them tests for technical knowledge directly. The structure of the answer matters more than the content. You’re listening for concretes, named processes, and self-awareness.

- “How will I know if something goes wrong? Walk me through a real example from a past project.” This tests their transparency, specifically around bad news. If you just ask what metrics they track, you’ll get a rehearsed pitch from vendors with slick slide decks. Asking for a past example forces them to deal in facts. You want to hear about a specific instance: what went wrong, when it surfaced, who spotted it first, and how they broke the news to the client. Marcin warns against the generic “we’ll let you know as soon as an issue pops up” response. If they can’t give you an example, they probably hide problems until it’s too late. A Tech Lead who can openly discuss a past crisis is much more likely to be honest with you when things get tough.

- “Tell me about a time your team was assigned something that didn’t make sense to them. What happened?” This tests whether the vendor’s engineers actually engage with the work or just execute it. The question is intentionally open. You’re not telegraphing what kind of “didn’t make sense” you’re after (business value, technical approach, scope, priority); the Tech Lead has to surface it from real memory. Listen for a specific story: who flagged the issue, what got said, what changed (or didn’t), and what the Tech Lead did about it. Strong answers describe pushback, clarifying questions to the client or PO, and a resolution that came from real conversation. The weak answer is generic: “our team is generally engaged” or “we make sure everyone understands the why.” No specific instance means no engagement to verify. A team that never questions the work won’t catch you when the brief itself turns out to be wrong.

- “Suppose three months in I’m unhappy with how this is going. What would be the three most likely reasons?” Tests honesty, experience, and accountability in one move. You want to hear a real, named pattern from past experience and what they learned from it. The answers Marcin specifically warns against are “there’s no scenario where you’d be unhappy” and anything that shifts blame to changing requirements. Both are deflection.

- “Tell me about a time a client pushed back on something your team built or proposed. What was the feedback, what did you think when you heard it, and what did you actually change as a result?” Three-part question on purpose. It tests whether they can name a real instance, whether they can be honest about their initial reaction, and whether anything actually changed in how the team works afterward. The strongest answers carry one signal in particular: curiosity. A Tech Lead who treats client pushback as something to understand (“we wanted to know why they saw it that way”) rather than something to defend against will treat your feedback the same way. Marcin’s red flags here: deflecting the feedback onto the client (“once we explained the technical reasoning, they were on board”), a defensive posture you can hear in the framing (“the client wasn’t fully aligned with our approach”), insisting the team was high-performing despite the pushback, or a vague “we adjusted our communication” with no concrete change in process.

- “What’s the technical standard your team will hold? Walk me through your tech checklist.” Look for an actual checklist or a named standard. Marcin’s specific red flag: “the high standard kicks in once we get going.” The standard is either there from day one, or it isn’t.

- “Walk me through what the first 2-4 weeks looked like on a similar project you ran. Not the plan you sold – the actual first month.” Past tense on purpose. A polished 4-week roadmap is easy to fabricate. The lived version of those weeks – what got discovered, what got re-scoped, what slipped – is harder to fake. If they can describe week by week what actually happened (not what was supposed to happen), they’ve shipped before. You are also listening for the process they bring to the table. As Marcin notes: you can just hire a developer yourself; you are hiring a vendor to get proven delivery processes, not just hands to write code. If they default to “we’d start with discovery and set up sprint planning,” they’re describing textbook theory, not a battle-tested process.

How to Interview a Vendor’s Senior Developer

This section is built on input from our five senior developers – the same people who run our internal technical interviews when we hire. They’ve sat on the asking side of this table many times. The patterns below are what they listen for, repurposed for a buyer-side conversation.

The mistake most non-technical buyers make is trying to evaluate code knowledge they can’t evaluate. You don’t need to. The structure of how a senior approaches a problem tells you almost everything.

According to Sebastian, the strongest test is to present the senior with a concrete problem from a real project. Yours, ideally. Then watch what they do. A real senior asks questions first. They want to understand the project’s specifics and constraints before they propose anything. Then, from a high level, they describe the building blocks the system would need, the alternatives for each block, and the trade-offs. Sebastian’s framing: that single 20-minute exchange tells you more than any CV ever will. It tells you whether the years on their CV are hands-on years.

Watch how they structure their thinking:

- Did they ask clarifying questions first?

- Did they ask about the business problem, not just the technical surface?

- Did they list alternatives?

- Did they explain why one option won?

Whether their tech is correct doesn’t matter at this stage – you can’t verify that anyway. What matters more is whether their questions show they’re trying to understand what you’re building, not just how to build it. A senior who only digs into the tech stack will need someone to translate every business decision for them.
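If you want to capture the four checks above during the call, a small note-taking sketch like the one below is enough. It is our own illustration, not a scoring method the developers quoted here use; the class and field names are hypothetical, and it deliberately records presence or absence only, with no weights.

```python
from dataclasses import dataclass, fields


@dataclass
class WalkthroughNotes:
    """One yes/no per check from the list above (hypothetical field names)."""
    asked_clarifying_questions_first: bool
    asked_about_business_problem: bool
    listed_alternatives: bool
    explained_why_one_option_won: bool


def missing_signals(notes: WalkthroughNotes) -> list[str]:
    """Return the names of the checks the senior did not demonstrate."""
    return [f.name for f in fields(notes) if not getattr(notes, f.name)]


# Example: strong on structure, but never asked about the business problem.
notes = WalkthroughNotes(
    asked_clarifying_questions_first=True,
    asked_about_business_problem=False,
    listed_alternatives=True,
    explained_why_one_option_won=True,
)
print(missing_signals(notes))
```

The output is a list of gaps to follow up on in the next conversation, not a pass/fail verdict.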

Layer four probes on top of the real-problem walkthrough:

- Trade-off reasoning (Paweł). Ask about a recent architectural decision: alternatives, and why this one. Paweł’s tell is the X/Y/Z structure: a real senior says “the options were X, Y, Z. I picked X because A, B, C.” No structure means no decision worth defending.

- Consequence awareness (Mateusz). Ask whether a recent decision held up and what they’d do differently now. Real seniors react to their own decisions over time. People who can’t name what they’d change have probably never owned a decision long enough to find out.

- The why behind tech choices (Mateusz). Pick a technology the team uses heavily and ask them to defend it. Not “because it’s standard.” Actually why. His biggest red flag is “because that’s how it’s done” – the vendor equivalent of “if all you have is a hammer, everything looks like a nail.” You’ll end up paying for a hammer-shaped solution.

- Over-engineering check (Kacper). Ask: “how would you build a simple internal CRUD app for 50 users?” A real senior describes a proportional solution. Someone who proposes microservices, event sourcing, and a custom design system for a 50-user CRUD has a judgment problem in the other direction. Restraint matters as much as the ability to scale.

One behavioral signal runs through all of this: good seniors ask clarifying questions before they recommend anything. Sebastian made this point specifically. Someone who jumps straight to a recommendation is pattern-matching to surface clues. They’re answering a problem they’ve solved before, which may or may not be yours.

A caveat on this whole section: weight what a senior asks and reasons through, not how smoothly they say it. Some excellent senior developers are blunt, hesitant, or just bad at sales-style communication. The signal is the structure of their thinking. Smooth delivery doesn’t add to that, and the lack of it doesn’t subtract.

When to Bring a Technical Advisor Into Vendor Vetting

The probes above work whether you’re technical or not. But there’s a ceiling.

If you have a CTO or a senior tech lead on your side, go deeper. Szymon ranks a 30-minute pair-programming session as the strongest possible signal once you have technical depth in the room. You learn more about how someone thinks in 30 minutes of working through code together than you would in three calls. Pair programming requires that depth, though. Without a technical advisor in the room, you can’t read what you’re seeing.

If you don’t have that depth in-house, get it from somewhere. Hire a technical advisor for a few hours. Borrow a CTO friend. Pull in someone from your board who’s lived on the engineering side. We can’t put a number on how much going in without technical backup raises your risk – we don’t have that data. What we can say is that the math is asymmetric: a few hours of senior technical attention is cheap. A wrong vendor caught three months in is not.

Software Vendor Red Flags to Listen For

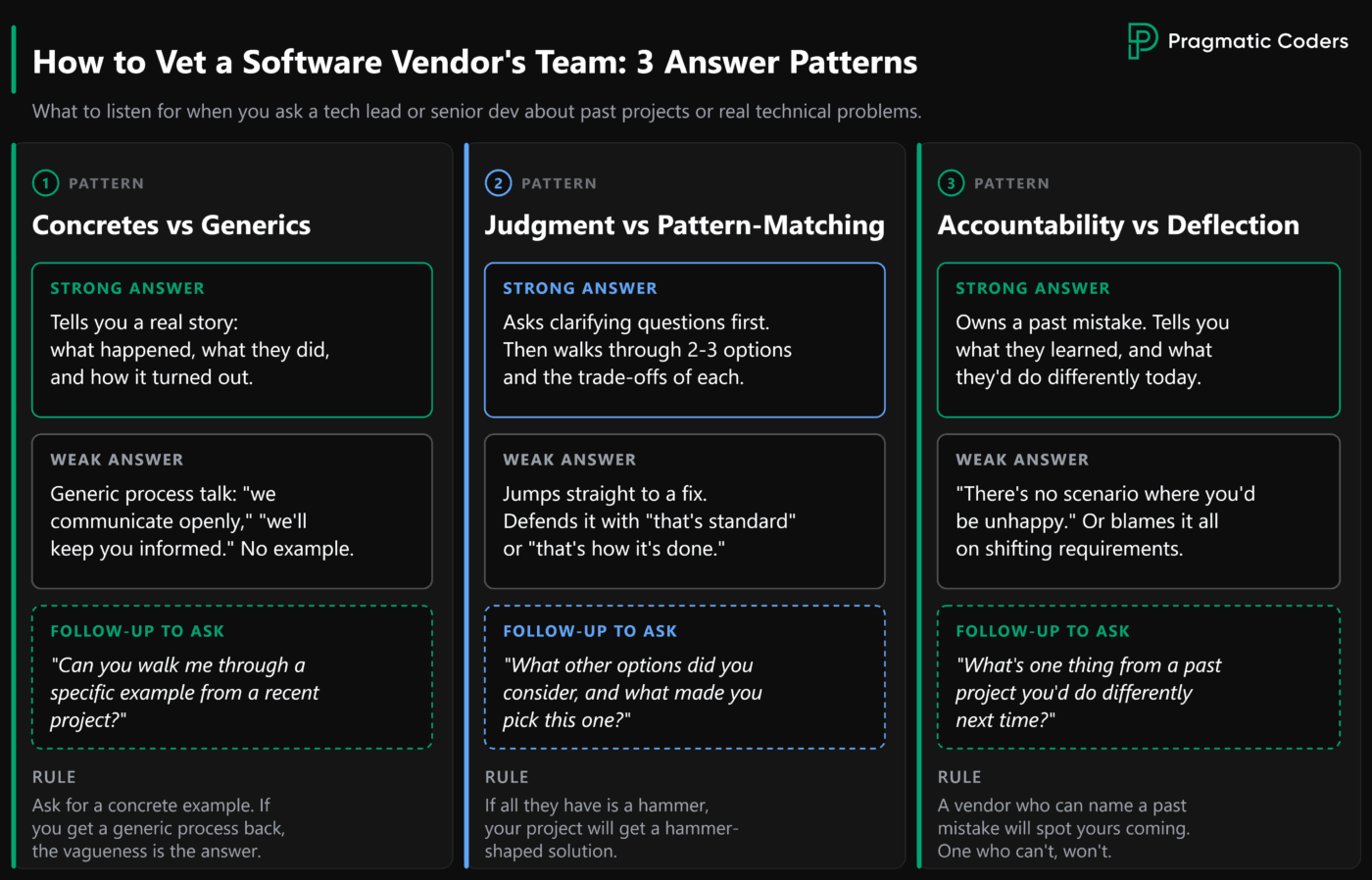

You’ll hear most of these flags during the interviews above. The pattern across all of them is consistent: generics where you expected concretes.

- “Because that’s how it’s done.” Inability to explain a technical decision (Mateusz’s biggest red flag).

- Vague process talk about the first weeks: “we’ll start with sprint events” without a concrete plan (Marcin).

- Quality bar postponed: “the high standard kicks in once we get going” (Marcin).

- Long delivery horizons in early conversations: “the app will be in production in a year” (Marcin).

- Over-engineering reflexes: proposing solutions disproportionate to the problem – microservices and event sourcing for a 50-user internal CRUD, for instance (Kacper).

- Answering before asking. A senior who jumps to a recommendation without clarifying questions is pattern-matching, not thinking (Sebastian).

- “There’s no scenario where you’d be unhappy,” or blame-shifting when asked what could go wrong (Marcin).

- LeetCode-style algorithm trivia as the team’s hiring filter (Szymon, with the caveat that this matters mostly for vendors who base hiring on it).

When you ask for a concrete example and get a generic process description back, the vagueness itself is the answer. It tells you they don’t have a specific example to give you.

Conclusion

You can’t fully verify a vendor’s team in a single call. You can’t even fully verify them in three. But you can move from no signal to a lot of signal in 60 to 90 minutes if you know what to listen for. Years of experience and stack lists are weak signals – they tell you a vendor has shipped before, not whether they can ship your project. Judgment is the stronger one. That’s how the CTO and five senior devs I asked for this article see it.

The team conversation is one of five areas you need to evaluate before signing. For the full set of questions across process, team, pricing, maintenance, and risk, see our guide to questions to ask a software vendor before signing a contract.